Editorial Guide

History of AI Agents

AI agents did not appear overnight in 2025. They emerged from decades of work on reasoning, planning, reinforcement learning, interfaces, and increasingly capable language models. The modern agent is really a stack of earlier ideas finally becoming usable at the same time.

Summary

A narrative timeline of how AI agents evolved from theoretical ideas and brittle demos into real tool-using systems.

Timeline span

1950 to 2026 across 12 featured milestones.

The idea came first

Long before anyone talked about agentic workflows, researchers were already asking what it would mean for machines to perceive, decide, and act. The Turing Test framed intelligence as behavior, ELIZA showed how quickly humans project agency onto software, and Shakey made the concept of a goal-directed robot concrete.

These systems were narrow and fragile, but they introduced the core questions that still define agent design today: what world model does the system have, how does it choose actions, and how much autonomy should it be trusted with?

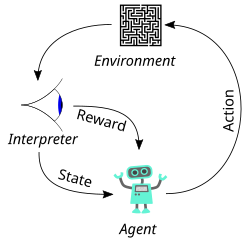

Learning systems made autonomy practical

The next step was not conversational polish but decision-making competence. Reinforcement learning research, from Atari-playing agents to AlphaGo and AlphaStar, showed that AI systems could act over long horizons, learn strategies from reward feedback, and match or beat top human players in complex environments.

Those milestones mattered because they made AI feel less like static pattern matching and more like an actor navigating state, uncertainty, and trade-offs. They were proto-agent systems even when they were not yet useful for everyday work.

LLMs turned agents into products

ChatGPT and GPT-4 made it normal to delegate open-ended cognitive work to AI. GPT-4o added fast multimodal interaction, and the 2025 agent wave made the leap from answering questions to using tools, browsing, writing code, and completing multi-step tasks.

That transition is why the agent story is bigger than one product category. It changes the role of software from something you operate directly to something you increasingly supervise, evaluate, and redirect.

Milestone chronology

The essential timeline behind this guide, ordered chronologically.

Turing's 'Computing Machinery and Intelligence'

In 1950, Alan Turing published his landmark paper in the journal Mind, proposing the 'Imitation Game' (now known as the Turing Test) as a way to evaluate machine intelligence. He opened with the question 'Can machines think?' but judged it too ill-defined to deserve discussion, and replaced it with a behavioral test: could a machine imitate human conversation convincingly enough that an interrogator could not reliably tell it apart from a person?

ELIZA: The First Chatbot

Joseph Weizenbaum created ELIZA at MIT, a program that simulated a Rogerian psychotherapist by matching keywords in the user's input and reflecting the user's own words back through scripted templates. Although it was purely rule-based and had no understanding, some users became emotionally attached to it and insisted it truly understood them, a reaction Weizenbaum found deeply disturbing.
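To make the pattern-matching mechanism concrete, here is a minimal Python sketch of an ELIZA-style responder. The keyword rules, reply templates, and the `respond` and `reflect` helpers are hypothetical illustrations, not Weizenbaum's original DOCTOR script; the point is only to show how matching a keyword and echoing the user's words back can feel like understanding.

```python
import re

# Hypothetical keyword rules in the spirit of ELIZA's therapist script
# (not Weizenbaum's originals). Each rule pairs a regex with a reply template.
RULES = [
    (re.compile(r"\bi need (.*)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]

# Swap first- and second-person words so echoed fragments read naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

# Fallback used when no keyword matches, which hides the lack of understanding.
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Lowercase a captured fragment and swap pronouns word by word."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(utterance: str) -> str:
    """Return the template of the first matching rule, filled with reflected captures."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return DEFAULT_REPLY
```

Calling `respond("I am sad about my job.")` returns "How long have you been sad about your job?", even though nothing in the program represents sadness, jobs, or the user.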

Shakey the Robot

Developed at SRI (then the Stanford Research Institute) between 1966 and 1972, Shakey was the first general-purpose mobile robot that could reason about its own actions. It combined computer vision, natural language processing, and planning to navigate rooms, push objects, and solve simple tasks. Work on Shakey also produced the A* search algorithm and the STRIPS planner.

TD-Gammon: Reinforcement Learning Plays Backgammon

Gerald Tesauro created TD-Gammon, a neural network that learned to play backgammon at expert level through self-play using temporal difference reinforcement learning. It discovered novel strategies that surprised human experts.
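Temporal difference learning nudges a value estimate toward a target built from the next observed reward plus the current estimate for the next state: V(s) ← V(s) + α[r + γV(s') − V(s)]. TD-Gammon applied TD(λ) to a neural network; the sketch below is a deliberately simplified, tabular TD(0) example on a five-state random walk (a standard textbook toy problem, not backgammon). The function names `td0_update` and `random_walk_td0`, and the step size `alpha` and discount `gamma`, are hypothetical choices for illustration.

```python
import random


def td0_update(values, state, reward, next_state, alpha=0.1, gamma=1.0, terminal=False):
    """Move V(state) toward the one-step TD target r + gamma * V(next_state)."""
    # A terminal transition has no next-state value, so the target is just the reward.
    target = reward if terminal else reward + gamma * values[next_state]
    values[state] += alpha * (target - values[state])
    return values[state]


def random_walk_td0(episodes=5000, alpha=0.05, seed=0):
    """Learn state values for a 5-state random walk using tabular TD(0)."""
    # States 0..4 are non-terminal; stepping left of 0 or right of 4 ends the episode.
    # Only exiting on the right pays reward 1, so the true values are (s + 1) / 6.
    rng = random.Random(seed)
    values = [0.5] * 5
    for _ in range(episodes):
        state = 2  # every episode starts in the middle state
        while True:
            next_state = state + rng.choice((-1, 1))
            if next_state < 0:
                td0_update(values, state, 0.0, None, alpha, terminal=True)
                break
            if next_state > 4:
                td0_update(values, state, 1.0, None, alpha, terminal=True)
                break
            td0_update(values, state, 0.0, next_state, alpha)
            state = next_state
    return values
```

Because only the right-hand exit is rewarded, the learned values drift toward roughly 0.17, 0.33, 0.5, 0.67, and 0.83 from left to right, even though the program is never told those numbers. It learns them purely by bootstrapping from its own next-state estimates, which is the same core idea TD-Gammon scaled up with self-play.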

DeepMind's DQN Masters Atari Games

DeepMind demonstrated a deep reinforcement learning agent, the Deep Q-Network (DQN), that learned to play Atari 2600 games directly from raw pixels and the game score. Using the same architecture and hyperparameters for every game, with no game-specific engineering, it matched or exceeded human-level performance on many of them. Google acquired DeepMind in early 2014 for a reported sum of around $500 million.

AlphaGo Defeats Lee Sedol

In March 2016, DeepMind's AlphaGo defeated Lee Sedol, one of the greatest Go players ever, 4-1 in a five-game match in Seoul. Go has more possible board positions than there are atoms in the observable universe, so brute-force search was infeasible. Instead, AlphaGo combined deep neural networks, trained first on human expert games and then through reinforcement learning from self-play, with Monte Carlo tree search. In Game 2 it played Move 37, a move so unexpected that commentators called it 'beautiful' and 'not a human move.'

ChatGPT: AI Goes Mainstream

In November 2022, OpenAI released ChatGPT, a conversational AI built on GPT-3.5 and fine-tuned with RLHF (Reinforcement Learning from Human Feedback). It reached 1 million users in 5 days and an estimated 100 million monthly users in about 2 months, which at the time made it the fastest-growing consumer application on record. People used it to write emails, debug code, brainstorm ideas, and handle many other everyday tasks.

GPT-4: Multimodal Intelligence

In March 2023, OpenAI released GPT-4, a multimodal model that accepted both text and image inputs. In OpenAI's evaluations it scored around the 90th percentile on a simulated bar exam and 1410 on the SAT, and it showed markedly more nuanced reasoning than its predecessor. OpenAI reported large gains over GPT-3.5 in accuracy, safety, and overall capability.

GPT-4o: Omni Model

In May 2024, OpenAI released GPT-4o ('o' for 'omni'), a single model that natively processed text, audio, images, and video, with voice response times close to the pace of human conversation. In launch demos it held natural voice conversations with emotional expression, sang, laughed, and responded to visual input in real time.

AI Coding Agents Transform Software Development

AI coding agents like Claude Code, Cursor, GitHub Copilot's agentic workflows, and OpenClaw-linked remote coding loops pushed beyond autocomplete into delegated engineering work. These systems could inspect repositories, run tests, edit files, use terminals and browsers, and iterate on tasks over multiple turns.

The Rise of AI Agents

By 2025, frontier models were being wrapped in systems that could browse the web, call tools, edit files, execute code, manage state, and carry multi-step tasks forward with limited supervision. Claude Code, OpenAI's Operator, Google's Project Mariner, OpenClaw, and a wave of agent frameworks turned 'AI agent' from a research label into a practical product category.

AI Agents in the Workforce: March 2026

By March 2026, AI agents were being used in day-to-day operations for coding, research, support, scheduling, and internal automation. Rather than replacing whole teams outright, the clearest pattern was AI taking over narrow but valuable chunks of knowledge work and operating as an always-available teammate inside existing tools and channels.