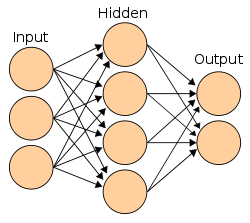

First Mathematical Model of Neural Networks

Warren McCulloch and Walter Pitts published 'A Logical Calculus of the Ideas Immanent in Nervous Activity' (1943), creating the first mathematical model of an artificial neuron. They showed that networks of simple binary threshold neurons can realize any finite logical expression, and argued that such networks, given an external memory such as a tape, could in principle compute any function computable by a Turing machine.
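
To make the idea of a binary neuron concrete, here is a minimal sketch of a McCulloch-Pitts style unit in Python. It is a simplified illustration, not the notation of the original paper: the function name `mp_neuron`, its parameters, and the gate helpers are hypothetical names chosen for this example. The unit fires (outputs 1) when the number of active excitatory inputs reaches a fixed threshold, and any active inhibitory input vetoes firing.

```python
def mp_neuron(inputs, threshold, inhibitory=()):
    """Simplified McCulloch-Pitts unit (illustrative, hypothetical API).

    inputs     -- sequence of binary values (0 or 1)
    threshold  -- number of active excitatory inputs needed to fire
    inhibitory -- indices of inputs that veto firing when active
    """
    # Absolute inhibition: a single active inhibitory input blocks the output.
    if any(inputs[i] for i in inhibitory):
        return 0
    # Count active excitatory inputs and compare against the threshold.
    excitatory = sum(x for i, x in enumerate(inputs) if i not in inhibitory)
    return 1 if excitatory >= threshold else 0


def AND(a, b):
    return mp_neuron([a, b], threshold=2)


def OR(a, b):
    return mp_neuron([a, b], threshold=1)


def NOT(a):
    # No excitatory inputs and threshold 0: fires unless inhibited.
    return mp_neuron([a], threshold=0, inhibitory=(0,))


def XOR(a, b):
    # Two-layer network: fires when (a OR b) is active and (a AND b) is not.
    return mp_neuron([OR(a, b), AND(a, b)], threshold=1, inhibitory=(1,))


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, AND(a, b), OR(a, b), XOR(a, b))
```

A single unit covers AND, OR, and NOT, while XOR needs units wired into a small network, which is the sense in which connected neurons can build up more complex logical functions.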