Long Short-Term Memory (LSTM)

What Happened

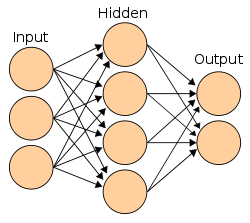

In 1997, Sepp Hochreiter and Jürgen Schmidhuber published the LSTM architecture, which addressed the vanishing gradient problem that had plagued recurrent neural networks. By maintaining a memory cell whose gates control the flow of information into and out of it, LSTMs could learn long-range dependencies in sequential data.
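To make the gating idea concrete, here is a minimal sketch of a single-unit LSTM cell in plain Python. It assumes the now-common formulation with a forget gate, which was a later addition; the original design used input and output gates around a cell with a fixed self-connection. The names `lstm_step`, `run`, and `PARAMS` are hypothetical, and the weights are hand-picked for illustration, not trained.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """One time step of a single-unit LSTM cell (all values are scalars)."""

    def pre_activation(name):
        # Each gate sees the current input and the previous hidden state.
        w_x, w_h, b = params[name]
        return w_x * x + w_h * h_prev + b

    i = sigmoid(pre_activation("input"))        # how much new content to write
    f = sigmoid(pre_activation("forget"))       # how much old content to keep
    o = sigmoid(pre_activation("output"))       # how much of the cell to expose
    g = math.tanh(pre_activation("candidate"))  # proposed new content

    c = f * c_prev + i * g   # additive update of the memory cell
    h = o * math.tanh(c)     # hidden state read out through the output gate
    return h, c


# Hypothetical hand-picked weights (w_x, w_h, b); illustrative only.
PARAMS = {
    "input": (10.0, 0.0, -5.0),    # opens only when x is close to 1
    "forget": (0.0, 0.0, 5.0),     # stays close to 1, so the cell retains its value
    "output": (0.0, 0.0, 5.0),     # stays close to 1, so the cell is readable
    "candidate": (5.0, 0.0, 0.0),  # proposes a value with the sign of x
}


def run(xs, params=PARAMS):
    """Feed a sequence of scalar inputs through the cell, starting from zero state."""
    h, c = 0.0, 0.0
    for x in xs:
        h, c = lstm_step(x, h, c, params)
    return h, c


if __name__ == "__main__":
    # A single pulse followed by 50 steps of silence.
    h, c = run([1.0] + [0.0] * 50)
    print(f"cell state after 50 silent steps: {c:.3f}")
```

With these weights, the pulse at the first step is written into the cell, and because the forget gate stays close to 1 the cell state is still well above zero after 50 steps of zero input. The additive update `c = f * c_prev + i * g` is the part that lets information, and gradients during training, persist across many time steps.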

Why It Mattered

LSTM became the dominant recurrent architecture for roughly two decades. It powered speech recognition, machine translation, and text generation until Transformers displaced it. The 1997 paper is frequently described as the most-cited neural network paper of the 20th century.

Part of the Quiet Emergence (1994–2005) era · Browse all research breakthroughs · View all 1997 milestones