NLP

8 milestones in AI history

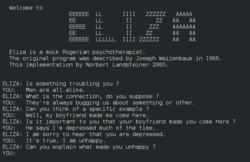

ELIZA: The First Chatbot

In 1966, Joseph Weizenbaum published ELIZA, a program that simulated a Rogerian psychotherapist using simple pattern matching. Although it was purely rule-based and understood nothing, some users became emotionally attached to it and insisted it truly understood them, a reaction Weizenbaum found deeply disturbing.
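
To make "simple pattern matching" concrete, here is a minimal sketch in Python of how a rule-based responder of this kind can work. The rules, the `REFLECTIONS` table, and the function names are hypothetical illustrations, not Weizenbaum's original script; the sketch only assumes the general idea of matching a keyword pattern and echoing part of the user's input back inside a canned template.

```python
import re

# Hypothetical rules for illustration only (not ELIZA's original script).
# Each rule pairs a pattern with a reply template; {0} is filled with the
# text captured by the pattern.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
DEFAULT_REPLY = "Please go on."

# Swap first-person words for second-person ones so the echo reads naturally.
REFLECTIONS = {"my": "your", "i": "you", "me": "you", "am": "are"}


def reflect(fragment):
    """Lowercase the fragment and swap pronouns word by word."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(utterance):
    """Return the reply of the first matching rule, or a stock fallback."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return DEFAULT_REPLY


print(respond("I need a break from my job."))
print(respond("Hello there"))
```

Nothing here models meaning: the program only rearranges the user's own words, which is why the emotional reactions it provoked were so striking.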

SHRDLU: Natural Language Understanding

Around 1970, Terry Winograd created SHRDLU at MIT, a program that could understand and respond to English commands about a simulated "blocks world." Users could ask it to move objects and answer questions about their arrangement, and it could resolve pronouns and track context within its limited domain.

Long Short-Term Memory (LSTM)

In 1997, Hochreiter and Schmidhuber published the LSTM architecture, which largely overcame the vanishing gradient problem that plagued recurrent neural networks. LSTMs could learn long-range dependencies in sequential data by maintaining a memory cell with gates that controlled what information was written to, kept in, and read from it.
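
The gating idea can be sketched as a single time step of a scalar LSTM cell. This is an illustrative sketch, not the paper's exact formulation: it uses the now-common variant with a forget gate (which was added in later work), and the `params` layout and function names are assumptions made for this example.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """One time step of a scalar LSTM cell.

    params maps each of "forget", "input", "output", "candidate" to a
    hypothetical (input_weight, recurrent_weight, bias) triple.
    """
    def pre_activation(name):
        w, u, b = params[name]
        return w * x + u * h_prev + b

    f = sigmoid(pre_activation("forget"))      # how much old memory to keep
    i = sigmoid(pre_activation("input"))       # how much new content to write
    o = sigmoid(pre_activation("output"))      # how much memory to expose
    g = math.tanh(pre_activation("candidate")) # proposed new content

    c = f * c_prev + i * g   # memory cell: gated mix of old and new
    h = o * math.tanh(c)     # hidden state read out from the cell
    return h, c


# With the forget gate nearly open and the input gate nearly closed,
# the cell carries its previous value forward almost unchanged.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}
h, c = lstm_step(x=1.0, h_prev=0.0, c_prev=2.0, params=params)
print(h, c)
```

Because the cell update is additive (`f * c_prev + i * g`), information and gradients can pass through many steps when the forget gate stays close to 1, which is the intuition behind learning long-range dependencies.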

IBM Watson Wins Jeopardy!

In February 2011, IBM's Watson system defeated two of the most successful Jeopardy! champions, Ken Jennings and Brad Rutter, in a televised match. Watson combined natural language processing, information retrieval, and machine learning to interpret clues that often relied on puns and wordplay.

Apple Launches Siri

In October 2011, Apple introduced Siri as a built-in feature of the iPhone 4S, making it the first major voice assistant integrated into a mainstream consumer device. Users could ask questions, set reminders, and control their phone with natural speech.

Word2Vec: Words as Vectors

In 2013, Google researchers published Word2Vec, showing that relatively small neural networks could efficiently learn meaningful vector representations of words from large text corpora. The famous example `king - man + woman ≈ queen` made the idea vivid: semantic relationships could be captured geometrically in vector space.
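
The arithmetic behind `king - man + woman ≈ queen` can be illustrated with a toy example. The 2-D vectors below are hand-made for this sketch (one axis loosely standing for "royalty", the other for "gender"); they are not learned Word2Vec embeddings, and the `analogy` helper is a hypothetical name. Real embeddings have hundreds of dimensions, but the lookup works the same way: compute the target vector, then pick the nearest remaining word by cosine similarity.

```python
import math

# Hand-made toy vectors, NOT learned embeddings.
TOY_VECTORS = {
    "king": (0.9, 0.9),
    "queen": (0.9, -0.9),
    "man": (0.1, 0.9),
    "woman": (0.1, -0.9),
    "apple": (-0.8, 0.0),
}


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def analogy(a, b, c, vectors):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding a, b, c."""
    target = tuple(x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c]))
    candidates = (word for word in vectors if word not in (a, b, c))
    return max(candidates, key=lambda word: cosine(target, vectors[word]))


print(analogy("king", "man", "woman", TOY_VECTORS))
```

Excluding the three input words from the candidates mirrors common practice when evaluating analogies, since the nearest vector to the target is otherwise often one of the inputs.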

Attention Is All You Need: The Transformer

In 2017, eight researchers at Google published "Attention Is All You Need," introducing the Transformer architecture. It replaced recurrence with self-attention, which lets every position in a sequence attend to every other position and allows the whole sequence to be processed in parallel. The paper's title was deliberately bold, and it proved prescient.
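
As a rough illustration of self-attention, the sketch below applies scaled dot-product attention to a short list of token vectors. It is deliberately simplified: the same vectors serve as queries, keys, and values, whereas the Transformer applies learned projections and uses multiple heads. The function names are assumptions made for this example.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with queries = keys = values = tokens.

    tokens is a list of equal-length vectors (lists of floats).
    """
    d = len(tokens[0])
    outputs = []
    for query in tokens:
        # Similarity of this token to every token, scaled by sqrt(d).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, tokens))
            for j in range(d)
        ])
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in self_attention(tokens):
    print(row)
```

No output row depends on another output row, only on the inputs, so all positions can be computed at once. That independence is what makes the computation parallel, in contrast to a recurrent network's step-by-step updates.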

BERT: Bidirectional Language Understanding

In 2018, Google published BERT (Bidirectional Encoder Representations from Transformers), a model that conditions on the context to both the left and the right of each word. BERT set new state-of-the-art results on 11 NLP tasks. In 2019 Google began using it in Search, where the company said it would affect about one in ten English-language queries in the U.S.