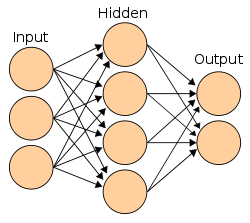

Neural Networks

11 milestones in AI history

First Mathematical Model of Neural Networks

In 1943, Warren McCulloch and Walter Pitts published 'A Logical Calculus of the Ideas Immanent in Nervous Activity,' creating the first mathematical model of an artificial neuron. They showed that networks of simple binary threshold neurons could implement any finite logical expression and argued that, given an unbounded external memory, such networks could in principle compute any function computable by a Turing machine.
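A binary threshold neuron of this kind can be sketched in a few lines. This is a minimal illustration, not the paper's own formalism: the function names, weights, and thresholds are chosen here for the example, and inhibition is modeled with a negative weight rather than the absolute inhibition used in the original model.

```python
def mp_neuron(inputs, weights, threshold):
    """Binary threshold unit: outputs 1 when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

# Logic gates built from single units (weights and thresholds are illustrative choices).
def AND(a, b):
    return mp_neuron([a, b], [1, 1], threshold=2)

def OR(a, b):
    return mp_neuron([a, b], [1, 1], threshold=1)

def NOT(a):
    # Assumption: a negative weight stands in for an inhibitory connection.
    return mp_neuron([a], [-1], threshold=0)

# Rows of (a, b, a AND b, a OR b) for every binary input pair.
truth_table = [(a, b, AND(a, b), OR(a, b)) for a in (0, 1) for b in (0, 1)]
```

Since AND, OR, and NOT are enough to build any Boolean expression, wiring such units together gives the logical completeness the paper argued for.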

The Perceptron

Frank Rosenblatt built the Mark I Perceptron, one of the first hardware implementations of an artificial neural network. It learned to classify simple visual patterns by adjusting its connection weights in response to its errors. The New York Times reported it as an 'Electronic Brain' that the Navy expected would 'be able to walk, talk, see, write, reproduce itself and be conscious of its existence.'
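The learning behind the perceptron can be sketched in software with the classic perceptron update rule. This is a simplified illustration, not a model of the Mark I hardware; the function names, the learning rate, and the toy OR dataset are assumptions made for the example.

```python
def predict(w, b, x):
    """Threshold unit: 1 if the weighted sum plus bias is positive, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    """Perceptron rule: nudge the weights toward each misclassified example."""
    w = [0.0] * len(samples[0][0])
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for x, target in samples:
            delta = target - predict(w, b, x)  # +1, 0, or -1
            if delta != 0:
                w = [wi + lr * delta * xi for wi, xi in zip(w, x)]
                b += lr * delta
                errors += 1
        if errors == 0:  # every sample classified correctly, so stop early
            break
    return w, b

# Toy, linearly separable task (logical OR), chosen only for illustration.
OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
w, b = train_perceptron(OR_DATA)
```

On linearly separable data such as this, the rule is guaranteed to stop making mistakes after a finite number of updates.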

Perceptrons: The Book That Killed Neural Networks

In 1969, Minsky and Papert published 'Perceptrons,' proving mathematically that a single-layer perceptron cannot compute XOR or any other function that is not linearly separable. The result was correct, but the book was widely read as showing that neural networks in general were fundamentally limited, even though multi-layer networks can represent these functions.
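The XOR limitation is easy to check on a small scale. The sketch below is an illustration under stated assumptions, not a proof: it searches a small integer grid of weights (the grid range is arbitrary) for a single threshold unit that computes XOR, then shows a hand-wired two-layer network that does. The weights in `two_layer_xor` are picked by hand for the example.

```python
from itertools import product

def step(z):
    return 1 if z > 0 else 0

XOR = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 0}

def single_unit_solves_xor(w1, w2, b):
    """True if one threshold unit with these weights reproduces the XOR table."""
    return all(step(w1 * x1 + w2 * x2 + b) == y for (x1, x2), y in XOR.items())

# Exhaustive search over a small integer grid of weights and biases.
grid = range(-5, 6)
single_layer_hits = sum(
    single_unit_solves_xor(w1, w2, b) for w1, w2, b in product(grid, repeat=3)
)

def two_layer_xor(x1, x2):
    """Hidden units compute OR and AND; the output fires for OR-but-not-AND."""
    h_or = step(x1 + x2 - 0.5)
    h_and = step(x1 + x2 - 1.5)
    return step(h_or - h_and - 0.5)
```

The search finds no single unit that works, which matches the general result: no line separates the XOR inputs, but one hidden layer is enough to compute it.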

Backpropagation Discovered (Initially Ignored)

Paul Werbos described the backpropagation algorithm in his PhD thesis — a method for training multi-layer neural networks by propagating errors backward through the network. However, in the anti-neural-network climate of the 1970s, the work went largely unnoticed.
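Propagating errors backward amounts to applying the chain rule layer by layer. The sketch below is a minimal illustration, not Werbos's formulation: it assumes a tiny network with two sigmoid hidden units, one sigmoid output, and a squared-error loss, and all names and parameter values are made up for the example. It then compares one backpropagated gradient with a finite-difference estimate.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    W1, b1, W2, b2 = params
    h = [sigmoid(sum(W1[j][i] * x[i] for i in range(len(x))) + b1[j])
         for j in range(len(b1))]
    y = sigmoid(sum(W2[j] * h[j] for j in range(len(h))) + b2)
    return h, y

def loss(params, x, t):
    _, y = forward(params, x)
    return 0.5 * (y - t) ** 2

def backprop(params, x, t):
    """Gradients of the loss, computed by sending the output error backward."""
    W1, b1, W2, b2 = params
    h, y = forward(params, x)
    delta_out = (y - t) * y * (1 - y)                    # error at the output unit
    grad_W2 = [delta_out * hj for hj in h]
    delta_hid = [delta_out * W2[j] * h[j] * (1 - h[j])   # error passed back to hidden units
                 for j in range(len(h))]
    grad_W1 = [[delta_hid[j] * xi for xi in x] for j in range(len(h))]
    return grad_W1, delta_hid, grad_W2, delta_out

params = ([[0.1, -0.2], [0.4, 0.3]], [0.0, 0.1], [0.5, -0.6], 0.2)
x, t = [1.0, 0.5], 1.0
grad_W1, grad_b1, grad_W2, grad_b2 = backprop(params, x, t)

# Finite-difference check on a single weight, W1[0][1].
eps = 1e-6
W1_plus = [[0.1, -0.2 + eps], [0.4, 0.3]]
W1_minus = [[0.1, -0.2 - eps], [0.4, 0.3]]
numeric = (loss((W1_plus, *params[1:]), x, t)
           - loss((W1_minus, *params[1:]), x, t)) / (2 * eps)
```

The backpropagated value `grad_W1[0][1]` and the estimate `numeric` should agree to many decimal places, while backpropagation obtains every gradient from a single backward pass.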

Hopfield Networks: Physics Meets Neural Networks

Physicist John Hopfield showed that a type of recurrent neural network could serve as content-addressable memory, using concepts from statistical physics. The network would converge to stable states that could store and retrieve patterns — connecting neuroscience, physics, and computation.
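Content-addressable memory of this kind can be sketched with a Hebbian storage rule and repeated threshold updates. The code below is a simplified illustration; the function names, the eight-unit pattern, and the number of update sweeps are assumptions made for the example.

```python
def train_hopfield(patterns):
    """Hebbian storage: strengthen the link between units that agree in a pattern."""
    n = len(patterns[0])
    W = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    W[i][j] += p[i] * p[j] / n
    return W

def recall(W, state, sweeps=5):
    """Asynchronous updates: each unit in turn aligns with its weighted input."""
    s = list(state)
    for _ in range(sweeps):
        for i in range(len(s)):
            field = sum(W[i][j] * s[j] for j in range(len(s)))
            s[i] = 1 if field >= 0 else -1
    return s

stored = [1, -1, 1, -1, 1, -1, 1, -1]
W = train_hopfield([stored])

# Corrupt two of the eight units, then let the network settle.
noisy = list(stored)
noisy[0] = -noisy[0]
noisy[3] = -noisy[3]
recovered = recall(W, noisy)
```

Starting from the corrupted cue, the network settles back into the stored pattern, which is the sense in which the memory is addressed by content rather than by location.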

Backpropagation Rediscovered

In 1986, Rumelhart, Hinton, and Williams published 'Learning Representations by Back-propagating Errors' in Nature, demonstrating that backpropagation could train multi-layer neural networks effectively. The same year, the PDP (Parallel Distributed Processing) group published their influential two-volume work on connectionism.

NETtalk: Neural Network Learns to Speak

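NETtalk, built by Terrence Sejnowski and Charles Rosenberg, was a neural network that learned to pronounce English text by mapping letters to phonemes, which a speech synthesizer then read aloud. Its output started as babbling and gradually became intelligible, which invited comparisons with how a child learns to speak. It captured the public imagination and demonstrated backpropagation's potential.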

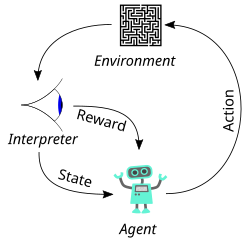

TD-Gammon: Reinforcement Learning Plays Backgammon

Gerald Tesauro created TD-Gammon, a neural network that learned to play backgammon at expert level through self-play using temporal difference reinforcement learning. It discovered novel strategies that surprised human experts.
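The core of temporal difference learning is to update each value estimate toward the reward plus the estimate of the next state. The sketch below is a deliberately small illustration, not TD-Gammon itself: TD-Gammon used TD(λ) with a neural network as its value function, whereas this example uses tabular TD(0) on a made-up four-state chain with a reward of 1 at the end. The function name and all parameter values are assumptions.

```python
def td0_chain(n_states=4, episodes=200, alpha=0.1, gamma=1.0):
    """Tabular TD(0) on a chain 0 -> 1 -> ... -> terminal, with reward 1 on the last step."""
    V = [0.0] * (n_states + 1)  # the last entry is the terminal state, fixed at 0
    for _ in range(episodes):
        for s in range(n_states):
            s_next = s + 1
            reward = 1.0 if s_next == n_states else 0.0
            td_error = reward + gamma * V[s_next] - V[s]  # temporal difference
            V[s] += alpha * td_error
    return V[:n_states]

values = td0_chain()
```

Only the last state ever sees the reward directly. Earlier states learn it indirectly, by bootstrapping from the estimates of the states that follow them, so every value in the chain drifts toward 1.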

Long Short-Term Memory (LSTM)

In 1997, Hochreiter and Schmidhuber published the LSTM architecture, which addressed the vanishing gradient problem that plagued recurrent neural networks. LSTMs could learn long-range dependencies in sequential data by maintaining a memory cell whose state is carried forward largely unchanged, with gates that control what is written to it and read from it.
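The gating idea can be sketched with a single scalar LSTM cell. This is an illustration under stated assumptions: it uses the now-common formulation with a forget gate, which was added in later work rather than in the original paper, and the parameter names and hand-set values are invented so that the cell stores its first input and then holds it.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell (the forget-gate variant is an assumption)."""
    i = sigmoid(p["wi"] * x + p["ui"] * h_prev + p["bi"])    # input gate: how much to write
    f = sigmoid(p["wf"] * x + p["uf"] * h_prev + p["bf"])    # forget gate: how much to keep
    o = sigmoid(p["wo"] * x + p["uo"] * h_prev + p["bo"])    # output gate: how much to expose
    g = math.tanh(p["wg"] * x + p["ug"] * h_prev + p["bg"])  # candidate value
    c = f * c_prev + i * g                                   # memory cell update
    h = o * math.tanh(c)
    return h, c

# Hand-set parameters: write when x = 1, otherwise keep the cell almost unchanged.
params = {
    "wi": 10.0, "ui": 0.0, "bi": -5.0,
    "wf": 0.0,  "uf": 0.0, "bf": 10.0,
    "wo": 0.0,  "uo": 0.0, "bo": 10.0,
    "wg": 5.0,  "ug": 0.0, "bg": 0.0,
}

h, c = 0.0, 0.0
inputs = [1.0] + [0.0] * 50  # one signal followed by fifty empty steps
for x in inputs:
    h, c = lstm_step(x, h, c, params)
```

Because the forget gate stays close to 1 and the input gate stays close to 0 on the empty steps, the cell state `c` is still near its initial written value after fifty steps. That near-constant path through the cell is what lets gradients survive over long sequences.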

Deep Belief Networks: Hinton Revives Deep Learning

In 2006, Geoffrey Hinton, Simon Osindero, and Yee-Whye Teh published 'A Fast Learning Algorithm for Deep Belief Nets,' showing that deep neural networks could be trained effectively by greedily pre-training one layer at a time as a restricted Boltzmann machine and then fine-tuning the whole network. This offered a practical answer to the long-standing difficulty of training networks with many layers.

Generative Adversarial Networks (GANs)

In 2014, Ian Goodfellow and colleagues introduced GANs: two neural networks, a generator and a discriminator, trained against each other, with the generator producing fake data and the discriminator trying to tell it apart from real data. The idea reportedly came to Goodfellow during a conversation at a bar. Yann LeCun called GANs 'the most interesting idea in the last 10 years in ML.'
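The competition between the two networks is usually written as a minimax objective. The notation below is not in the text above and is introduced here for illustration: $D(x)$ is the discriminator's estimated probability that $x$ is real, $G(z)$ is the generator's output for a noise sample $z$, and $p_{\text{data}}$ and $p_z$ are the data and noise distributions.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator tries to make both terms large by scoring real samples near 1 and generated samples near 0, while the generator tries to make the second term small by producing samples the discriminator scores as real.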