Perceptrons: The Book That Killed Neural Networks

What Happened

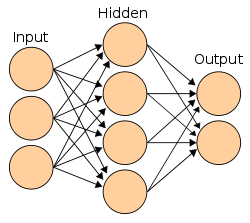

In 1969, Marvin Minsky and Seymour Papert published 'Perceptrons,' which proved mathematically that single-layer perceptrons cannot compute XOR or any other function that is not linearly separable. The result was technically correct, but the book was widely read as showing that neural networks in general were fundamentally limited, even though multi-layer networks can solve these problems.
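
The gap between single-layer and multi-layer networks can be illustrated with a small sketch. The function names (`single_layer`, `two_layer`, `solves_xor`) and the hand-picked weights below are assumptions made for illustration; they are not taken from the book. The grid search is a demonstration, not a proof.

```python
from itertools import product

# XOR truth table: (inputs, target output).
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def step(z):
    """Threshold activation: output 1 when the weighted sum is positive."""
    return 1 if z > 0 else 0


def single_layer(x, w1, w2, b):
    """One threshold unit, i.e. a single linear decision boundary."""
    return step(w1 * x[0] + w2 * x[1] + b)


def solves_xor(predict):
    """True if predict matches the XOR target on all four inputs."""
    return all(predict(x) == y for x, y in XOR)


# Try every weight/bias combination on a coarse grid (-4.0 to 4.0, step 0.5)
# and count how many single-layer units reproduce XOR.
grid = [i / 2 for i in range(-8, 9)]
single_layer_hits = sum(
    solves_xor(lambda x, w1=w1, w2=w2, b=b: single_layer(x, w1, w2, b))
    for w1, w2, b in product(grid, repeat=3)
)


def two_layer(x):
    """Two hidden threshold units (OR, AND) feeding one output unit.

    The weights are hand-picked for illustration, not learned.
    """
    h_or = step(x[0] + x[1] - 0.5)   # fires if at least one input is 1
    h_and = step(x[0] + x[1] - 1.5)  # fires only if both inputs are 1
    return step(h_or - h_and - 0.5)  # OR and not AND, which is XOR


print("single-layer solutions found on grid:", single_layer_hits)
print("two-layer network solves XOR:", solves_xor(two_layer))
```

The search finds no single-layer solution because none exists: with this threshold, XOR would require b ≤ 0, w1 + b > 0, w2 + b > 0, and w1 + w2 + b ≤ 0. Adding the two middle inequalities and subtracting the last gives b > 0, which contradicts b ≤ 0. Adding one hidden layer removes the obstacle.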

Why It Mattered

The book effectively halted neural network research for over a decade. Funding dried up and researchers moved to other approaches. The damage was immense, and it rested on an overgeneralization of the book's conclusions.

Key People

Marvin Minsky and Seymour Papert

Organizations

Part of the First AI Winter (1970–1979) era; a 1969 milestone.