Backpropagation Discovered (Initially Ignored)

What Happened

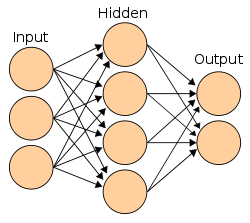

Paul Werbos described the backpropagation algorithm in his 1974 PhD thesis at Harvard: a method for training multi-layer neural networks by propagating errors backward through the network. However, in the anti-neural-network climate of the 1970s, the work went largely unnoticed.
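The sketch below is a rough modern illustration of what "propagating errors backward" means. It does not reproduce Werbos's original formulation. It assumes a tiny 2-3-1 network of sigmoid units, a squared-error loss, and the XOR task, which a single-layer network cannot represent. The error at the output unit is multiplied back through each output weight and each hidden unit's sigmoid derivative, and the chain rule then gives a gradient for every weight. All function names (`init_params`, `forward`, `backprop`, `sgd_step`) and the hyperparameters are hypothetical choices made for this example.

```python
import math
import random

# Illustrative sketch with hypothetical names; not Werbos's original formulation.
# Network: 2 inputs -> 3 sigmoid hidden units -> 1 sigmoid output.

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_params(n_in=2, n_hidden=3, seed=0):
    """Small random weights, zero biases; returned as (W1, b1, W2, b2)."""
    rng = random.Random(seed)
    W1 = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_hidden)]
    b1 = [0.0] * n_hidden
    W2 = [rng.uniform(-1.0, 1.0) for _ in range(n_hidden)]
    return W1, b1, W2, 0.0

def forward(params, x):
    """Forward pass: return hidden activations h and output y."""
    W1, b1, W2, b2 = params
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sigmoid(sum(w * hj for w, hj in zip(W2, h)) + b2)
    return h, y

def loss(params, data):
    """Squared error 0.5 * (y - t)^2 summed over the dataset."""
    return sum(0.5 * (forward(params, x)[1] - t) ** 2 for x, t in data)

def backprop(params, data):
    """Gradient of loss() with respect to every parameter, via the chain rule."""
    W1, b1, W2, _ = params
    gW1 = [[0.0] * len(row) for row in W1]
    gb1 = [0.0] * len(b1)
    gW2 = [0.0] * len(W2)
    gb2 = 0.0
    for x, t in data:
        h, y = forward(params, x)
        # Error signal at the output unit: dL/dy * sigmoid'(z_out).
        delta_out = (y - t) * y * (1.0 - y)
        gb2 += delta_out
        for j, hj in enumerate(h):
            gW2[j] += delta_out * hj
            # Send the error backward through weight W2[j] and hidden unit j's sigmoid.
            delta_h = delta_out * W2[j] * hj * (1.0 - hj)
            gb1[j] += delta_h
            for i, xi in enumerate(x):
                gW1[j][i] += delta_h * xi
    return gW1, gb1, gW2, gb2

def sgd_step(params, grads, lr):
    """One plain gradient-descent update; returns new parameters."""
    W1, b1, W2, b2 = params
    gW1, gb1, gW2, gb2 = grads
    W1 = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, gW1)]
    b1 = [b - lr * g for b, g in zip(b1, gb1)]
    W2 = [w - lr * g for w, g in zip(W2, gW2)]
    return W1, b1, W2, b2 - lr * gb2

# XOR: not linearly separable, so it needs the hidden layer.
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

if __name__ == "__main__":
    params = init_params()
    print("initial loss:", loss(params, XOR))
    for _ in range(5000):
        params = sgd_step(params, backprop(params, XOR), lr=0.5)
    print("final loss:", loss(params, XOR))
```

A finite-difference check is a cheap way to confirm hand-derived gradients like these. Nudge one weight by a small ε and compare the resulting change in loss with the value `backprop` returns. On XOR, plain gradient descent usually drives the loss down, though some random initializations can stall in a poor local minimum.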

Why It Mattered

It showed that multi-layer neural networks could, in principle, be trained end-to-end, with every weight adjusted using the error signal passed back from the output. Its neglect until the mid-1980s became a cautionary example of how important ideas can stall for years when a field turns against an entire line of research.

Key People

Paul Werbos

Part of the First AI Winter (1970–1979) era · 1974 research breakthrough