Hopfield Networks: Physics Meets Neural Networks

What Happened

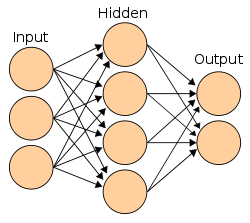

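In 1982, physicist John Hopfield showed that a recurrent neural network with symmetric connections could serve as a content-addressable memory, using concepts from statistical physics. The network has an energy function that never increases as its neurons update one at a time, so it converges to stable states (local energy minima). Patterns stored as those stable states can be retrieved from partial or noisy cues, connecting neuroscience, physics, and computation.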
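
To make the store-and-retrieve mechanism concrete, here is a minimal sketch of a Hopfield network in plain Python. It assumes the common ±1 formulation (the 1982 paper used 0/1 states), stores patterns with the Hebbian outer-product rule scaled by 1/N, uses zero thresholds, and updates neurons asynchronously in random order. The function names `train`, `energy`, and `recall` and the example patterns are illustrative, not taken from any library. The energy is `E(s) = -1/2 * sum over i, j of w[i][j] * s[i] * s[j]`; with symmetric weights and no self-connections, each single-neuron update can only lower E or leave it unchanged, so recall settles into a stable state.

```python
import random

def train(patterns):
    """Store ±1 patterns with the Hebbian outer-product rule, scaled by 1/N.

    Weights are symmetric (w[i][j] == w[j][i]) with a zero diagonal, which is
    what guarantees the energy never increases during asynchronous recall.
    """
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j] / n
    return w

def energy(w, s):
    """E(s) = -1/2 * sum_ij w[i][j] * s[i] * s[j] (zero thresholds assumed)."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))

def recall(w, probe, max_sweeps=100, seed=0):
    """Update one neuron at a time in random order until no neuron changes."""
    rng = random.Random(seed)
    s = list(probe)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        order = list(range(n))
        rng.shuffle(order)
        for i in order:
            h = sum(w[i][j] * s[j] for j in range(n))  # local field on neuron i
            new = 1 if h > 0 else -1 if h < 0 else s[i]  # keep state on a tie
            if new != s[i]:
                s[i] = new
                changed = True
        if not changed:  # a full sweep with no flips: a stable state
            break
    return s

# Store two orthogonal 8-neuron patterns, then recall from a corrupted copy.
p1 = [1, 1, 1, 1, -1, -1, -1, -1]
p2 = [1, -1, 1, -1, 1, -1, 1, -1]
w = train([p1, p2])

probe = [-1] + p1[1:]  # p1 with its first bit flipped
result = recall(w, probe)
print(result == p1)                         # True
print(energy(w, probe), energy(w, result))  # -1.5 -3.0
```

Here the corrupted probe lies in the basin of attraction of `p1`: one asynchronous sweep restores the flipped bit, and the energy drops from -1.5 to -3.0, the minimum associated with the stored pattern. Keeping a neuron's current state when its local field is exactly zero is one common tie-breaking choice; it keeps updates from flipping back and forth at zero energy change.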

Why It Mattered

The work revived serious interest in neural networks among physicists and helped make the field respectable again in the scientific community. Hopfield was awarded the 2024 Nobel Prize in Physics for this work, sharing it with Geoffrey Hinton.

Part of the Expert Systems Boom (1980–1987) era · 1982 milestone