Deep Belief Networks: Hinton Revives Deep Learning

What Happened

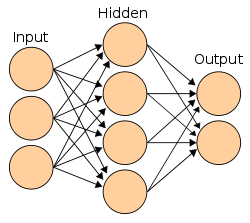

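In 2006, Geoffrey Hinton, with Simon Osindero and Yee-Whye Teh, published 'A Fast Learning Algorithm for Deep Belief Nets,' showing that deep neural networks could be trained effectively by greedily pre-training one layer at a time as a restricted Boltzmann machine, with each layer learning from the output of the layer below it. This offered a practical way around the long-standing difficulty of training networks with many layers.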
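To make the layer-by-layer idea concrete, here is a minimal NumPy sketch of greedy pre-training with a stack of binary restricted Boltzmann machines. It is an illustration under stated assumptions, not the paper's implementation: it uses one-step contrastive divergence (CD-1) with full-batch updates, and the names `RBM`, `cd1_update`, and `pretrain_stack`, along with the layer sizes, learning rate, and epoch count, are hypothetical choices made for this example. It also omits any fine-tuning stage after pre-training.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class RBM:
    """Binary restricted Boltzmann machine (illustrative, CD-1 training assumed)."""

    def __init__(self, n_visible, n_hidden, rng):
        self.rng = rng
        self.W = 0.01 * rng.standard_normal((n_visible, n_hidden))
        self.b_visible = np.zeros(n_visible)
        self.b_hidden = np.zeros(n_hidden)

    def hidden_probs(self, v):
        # P(h_j = 1 | v) for every hidden unit
        return sigmoid(v @ self.W + self.b_hidden)

    def visible_probs(self, h):
        # P(v_i = 1 | h) for every visible unit
        return sigmoid(h @ self.W.T + self.b_visible)

    def cd1_update(self, v0, lr):
        # Positive phase: hidden activations driven by the data
        h0_prob = self.hidden_probs(v0)
        h0_sample = (self.rng.random(h0_prob.shape) < h0_prob).astype(float)
        # Negative phase: one Gibbs step to get a reconstruction
        v1_prob = self.visible_probs(h0_sample)
        h1_prob = self.hidden_probs(v1_prob)
        # Move weights toward data statistics and away from reconstruction statistics
        n = v0.shape[0]
        self.W += lr * (v0.T @ h0_prob - v1_prob.T @ h1_prob) / n
        self.b_visible += lr * (v0 - v1_prob).mean(axis=0)
        self.b_hidden += lr * (h0_prob - h1_prob).mean(axis=0)
        # Mean squared reconstruction error, useful only as a rough progress signal
        return float(np.mean((v0 - v1_prob) ** 2))


def pretrain_stack(data, layer_sizes, epochs=20, lr=0.1, seed=0):
    """Train one RBM per layer; each RBM sees the hidden activations of the one below."""
    rng = np.random.default_rng(seed)
    rbms = []
    layer_input = data
    for n_hidden in layer_sizes:
        rbm = RBM(layer_input.shape[1], n_hidden, rng)
        for _ in range(epochs):
            rbm.cd1_update(layer_input, lr)
        rbms.append(rbm)
        # The trained layer's hidden probabilities become the next layer's training data
        layer_input = rbm.hidden_probs(layer_input)
    return rbms


if __name__ == "__main__":
    # Toy binary data, made up for this example: 64 samples with 12 features each
    toy = (np.random.default_rng(1).random((64, 12)) < 0.5).astype(float)
    stack = pretrain_stack(toy, layer_sizes=[8, 4])
    print([rbm.W.shape for rbm in stack])
```

The key design point is in `pretrain_stack`: each RBM is trained on its own, without labels, and only its hidden activations are passed upward, so no error signal has to travel through the whole stack during pre-training.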

Why It Mattered

The paper reignited interest in deep learning. Hinton showed that deep networks weren't dead; they just needed better training techniques. It is widely regarded as the starting gun for modern deep learning.

Organizations

Part of the Deep Learning Dawn (2006–2011) era; a 2006 milestone.