Deep Learning Breakthrough

2012–2017 · 11 milestones

AlexNet shocked the world. Deep learning conquered image recognition, games, and language. The Transformer architecture changed everything.

Milestones

AlexNet: The ImageNet Moment

AlexNet, a deep convolutional neural network, won the 2012 ImageNet competition by a staggering margin, cutting the top-5 error rate from about 26% to about 16%. Trained on two NVIDIA GTX 580 GPUs, it was deeper and far larger than previous entries. The AI community was stunned.

Word2Vec: Words as Vectors

Google researchers published Word2Vec, showing that relatively small neural networks could efficiently learn meaningful vector representations of words from large text corpora. The famous example `king - man + woman ≈ queen` made the idea vivid: semantic relationships could be captured geometrically in vector space.
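The analogy arithmetic can be sketched in a few lines. This is a minimal illustration that assumes tiny hand-made 3-dimensional vectors (the `vectors` table and the `analogy` helper are hypothetical, not learned Word2Vec embeddings or any library API); real embeddings typically have hundreds of dimensions and are learned from text.

```python
import math

# Toy vectors invented for illustration; the dimensions loosely stand for
# (royalty, maleness, femaleness). Real Word2Vec vectors are learned.
vectors = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def analogy(a, b, c, vocab):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vocab[a], vocab[b], vocab[c])]
    candidates = (w for w in vocab if w not in (a, b, c))
    return max(candidates, key=lambda w: cosine(target, vocab[w]))

result = analogy("king", "man", "woman", vectors)
print(result)  # prints: queen
```

Excluding the three input words from the candidates is a common convention in analogy evaluation; without it, the nearest vector is often one of the inputs.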

DeepMind's DQN Masters Atari Games

DeepMind demonstrated a deep reinforcement learning agent (Deep Q-Network) that learned to play Atari 2600 games directly from pixel inputs, achieving superhuman performance on many games with no task-specific engineering. Google acquired DeepMind for ~$500 million shortly after.
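At the core of a Deep Q-Network is the one-step Q-learning target. The sketch below shows only that target computation, assuming the network's predictions are already available as a plain list of Q-values; the function names and the `gamma` default are illustrative choices, and the convolutional network, replay memory, and training loop are omitted.

```python
def q_learning_target(reward, next_q_values, gamma=0.99, done=False):
    """One-step target: r if the episode ended, else r + gamma * max_a' Q(s', a')."""
    if done:
        return reward
    return reward + gamma * max(next_q_values)

def td_error(q_value, target):
    """Temporal-difference error that training pushes toward zero."""
    return target - q_value

# Hypothetical numbers: reward 1.0, three candidate actions in the next state.
target = q_learning_target(1.0, [0.5, 2.0, 1.0], gamma=0.9)
print(target, td_error(2.0, target))
```

The network is trained so that its estimate for the action actually taken moves toward this target.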

Generative Adversarial Networks (GANs)

Ian Goodfellow and colleagues introduced GANs: two neural networks, a generator and a discriminator, trained against each other, with one creating fake data and the other trying to detect it. The concept reportedly came to him during a conversation at a bar. Yann LeCun called GANs 'the most interesting idea in the last 10 years in ML.'
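The competition between the two networks comes down to two opposing loss functions. The sketch below assumes the discriminator's outputs are already given as probabilities that a sample is real; the function names are illustrative, the networks themselves are left out, and the generator loss shown is the commonly used non-saturating variant.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples are labeled 1, generated samples 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating loss: low when the discriminator scores fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical discriminator outputs for two real and two generated samples.
confident = discriminator_loss([0.9, 0.8], [0.1, 0.2])
confused = discriminator_loss([0.5, 0.5], [0.5, 0.5])
print(confident < confused)  # a discriminator that separates the two has lower loss
```

As the generator improves, the discriminator's scores on generated samples rise, which lowers the generator's loss and raises the discriminator's.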

Amazon Echo & Alexa

Amazon launched the Echo smart speaker with Alexa voice assistant, creating an entirely new product category. Alexa could play music, control smart home devices, answer questions, and run third-party 'skills.' It brought always-on AI into the living room.

OpenAI Founded

OpenAI was founded as a non-profit AI research lab with $1 billion in pledged funding, aiming to ensure artificial general intelligence benefits all of humanity. Its co-founders included Sam Altman (then president of Y Combinator), Elon Musk, and top researchers such as Ilya Sutskever from Google Brain.

ResNet: Deeper Than Ever

Microsoft Research introduced ResNet with skip connections (residual connections), which add each block's input back to its output and made it practical to train networks of up to 152 layers, 8x deeper than the earlier VGG networks. ResNet won ImageNet 2015 with a 3.57% top-5 error, below the commonly cited human benchmark of about 5.1%.
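A skip connection simply adds a block's input to the block's output, so the layers only need to learn a correction. The sketch below is a simplified illustration using small fully connected layers in plain Python; the helper names and weight shapes are assumptions, and the actual ResNet blocks use convolutions, batch normalization, and a ReLU after the addition.

```python
def linear(weights, x):
    """Matrix-vector product; weights is a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def relu(x):
    return [max(0.0, v) for v in x]

def residual_block(x, w1, w2):
    """y = x + F(x), where F is a small two-layer transform."""
    fx = linear(w2, relu(linear(w1, x)))
    return [xi + fi for xi, fi in zip(x, fx)]

x = [1.0, -2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]

# With all-zero weights F(x) is zero, so the block passes its input through unchanged.
print(residual_block(x, zeros, zeros))
```

Because a block can fall back to the identity this easily, stacking more blocks does not have to make the network harder to optimize.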

TensorFlow Open-Sourced

Google open-sourced TensorFlow, its internal machine learning framework. This gave every researcher and developer access to the same tools Google used internally. PyTorch (Facebook, 2016) followed, creating a healthy competition that accelerated the entire field.

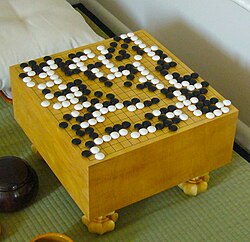

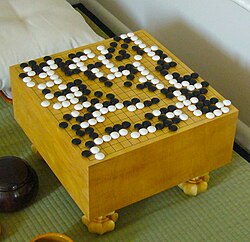

AlphaGo Defeats Lee Sedol

DeepMind's AlphaGo defeated Lee Sedol, one of the greatest Go players ever, 4-1 in a five-game match in Seoul. Go has more possible positions than there are atoms in the observable universe, so exhaustive search was impossible. AlphaGo instead combined deep neural networks, trained on human games and through reinforcement learning, with Monte Carlo tree search. In Game 2, AlphaGo played Move 37, a move so creative that experts called it 'beautiful' and 'not a human move.'

Attention Is All You Need: The Transformer

Eight researchers at Google published 'Attention Is All You Need,' introducing the Transformer architecture. It replaced recurrence with self-attention mechanisms that could process entire sequences in parallel. The paper's title was deliberately bold — and proved prescient.
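Self-attention can be sketched as scaled dot-product attention, softmax(QKᵀ / √d_k)V, computed for every position of the sequence. The version below is a minimal pure-Python illustration that assumes the token vectors are used directly as queries, keys, and values; in the actual architecture these come from learned linear projections, and several attention heads run side by side.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:  # each position is independent, hence parallelizable
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
    return outputs

# A hypothetical 3-token sequence of 2-dimensional vectors attending to itself.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
for row in attention(tokens, tokens, tokens):
    print(row)
```

No position's output depends on another position's output, only on the shared inputs, which is why the whole sequence can be processed at once rather than step by step as in a recurrent network.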

AlphaGo Zero: Learning From Scratch

AlphaGo Zero achieved superhuman Go performance with no human knowledge beyond the rules of the game: no training data from human games and no hand-crafted features. It learned entirely through self-play, and within 40 days it surpassed all previous versions, including the one that beat Lee Sedol.