DeepMind

5 milestones in AI history

DeepMind's DQN Masters Atari Games

DeepMind demonstrated a deep reinforcement learning agent, the Deep Q-Network (DQN), that learned to play Atari 2600 games directly from pixel inputs. Using the same architecture across games, with no game-specific engineering, it reached human-level or better performance on many of them. Google acquired DeepMind shortly after, for a reported ~$500 million.
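As a rough illustration of the Q-learning idea that DQN builds on, the sketch below computes one-step learning targets: the reward plus the discounted value of the best next action. The function name `td_targets`, the sample numbers, and the use of plain lists in place of a neural network's outputs are all assumptions made for illustration, not DeepMind's code.

```python
def td_targets(rewards, next_q_values, dones, gamma=0.99):
    """One-step Q-learning targets: r + gamma * max_a' Q(s', a').

    rewards:       reward received at each step
    next_q_values: per-step list of estimated Q-values for every action
                   in the next state (hypothetical stand-in for a network)
    dones:         True where the episode ended, so no future value is added
    """
    targets = []
    for reward, next_q, done in zip(rewards, next_q_values, dones):
        # Terminal steps have no future reward to bootstrap from.
        bootstrap = 0.0 if done else gamma * max(next_q)
        targets.append(reward + bootstrap)
    return targets


# Illustrative values only.
rewards = [1.0, 0.0]
next_q_values = [[0.5, 2.0], [1.0, 3.0]]
dones = [False, True]
targets = td_targets(rewards, next_q_values, dones, gamma=0.9)
```

In a DQN-style agent, the network's prediction for the action actually taken would be nudged toward each of these targets.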

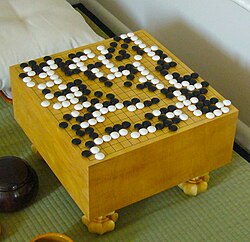

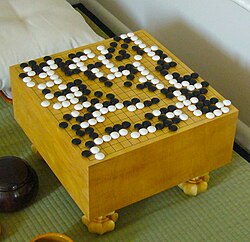

AlphaGo Defeats Lee Sedol

DeepMind's AlphaGo defeated Lee Sedol, one of the greatest Go players ever, 4-1 in a five-game match in Seoul in March 2016. Go has roughly 10^170 legal board positions, far more than the roughly 10^80 atoms in the observable universe, so brute-force search was impossible. AlphaGo combined deep neural networks, trained on human expert games and refined through reinforcement learning, with Monte Carlo tree search. In Game 2, AlphaGo played Move 37, a move so creative that experts called it 'beautiful' and 'not a human move.'

AlphaGo Zero: Learning From Scratch

AlphaGo Zero achieved superhuman Go performance with no human knowledge beyond the rules of the game: no training data from human games and no hand-crafted features. It learned entirely through self-play, and within 40 days it surpassed all previous versions, including the one that beat Lee Sedol.

AlphaStar Masters StarCraft II

DeepMind's AlphaStar reached Grandmaster level in StarCraft II, a real-time strategy game requiring long-term planning, deception, and split-second tactics under incomplete information. Unlike Go or chess, players cannot see the whole map and must choose among a vastly larger set of possible actions in real time.

AlphaFold 2: Protein Folding Solved

DeepMind's AlphaFold 2 was widely hailed as solving the 50-year-old protein structure prediction problem after achieving accuracy comparable to experimental methods for many targets at CASP14. It predicts a protein's 3D structure from its amino acid sequence, a problem that had stumped biologists for half a century.