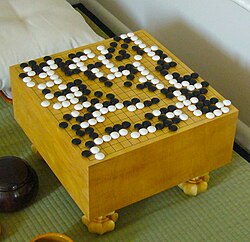

AlphaGo Zero: Learning From Scratch

What Happened

AlphaGo Zero achieved superhuman Go performance with no human knowledge beyond the rules of the game: no training data from human games and no hand-crafted features. It learned entirely through self-play, and within 40 days it surpassed all previous versions, including the one that beat Lee Sedol.

Why It Mattered

AlphaGo Zero demonstrated that an AI system could surpass accumulated human knowledge in a domain while starting from nothing but the rules. It raised profound questions about the nature of human expertise and about whether AI could discover strategies humans had never imagined.

Part of the Deep Learning Breakthrough (2012–2017) era