Editorial Guide

Most Important AI Milestones

Not every milestone matters in the same way. Some changed the technical direction of the field, some reoriented public expectations, and some proved that a previously speculative future had already arrived. This page focuses on the moments that genuinely bent the curve of AI history.

Summary

A curated shortlist of the AI milestones that most changed the direction, pace, or public meaning of the field.

Timeline span

1950 to 2025 across 9 featured milestones.

Foundations that still define the field

AI history starts with a small set of ideas that never really disappeared. Turing framed machine intelligence as observable behavior, Dartmouth turned a loose set of questions into a named discipline, and early neural-network work established the lineage that modern deep learning still follows.

These milestones remain important because nearly every later breakthrough can be read as a stronger answer to the same early questions: can machines reason, learn, generalize, and interact with the world in useful ways?

The modern acceleration points

AlexNet, the Transformer, and ChatGPT are the clearest acceleration points in modern AI history. AlexNet showed that deep learning could dominate a large-scale benchmark, the Transformer introduced the architecture behind today’s frontier systems, and ChatGPT moved AI from something experts followed to something the general public used.

If you want the shortest explanation for why AI feels different now than it did a decade ago, this is the sequence to study. It captures the move from promising research to mass-use software.

Why the current moment feels different

The latest milestones are important not only because models are stronger, but because the surrounding product patterns are changing. GPT-4 made it routine to apply language models to exam-grade and professional reasoning tasks, and the rise of AI agents shifted attention toward systems that can plan, use tools, and execute work across steps.

That is why today’s milestones feel less like isolated demos and more like infrastructure for a new computing model. The field is no longer just producing better outputs; it is producing systems that increasingly participate in workflows.

Milestone chronology

The essential timeline behind this guide, ordered chronologically.

Turing's 'Computing Machinery and Intelligence'

In 1950, Alan Turing published his landmark paper in the journal Mind, proposing the 'Imitation Game' (now known as the Turing Test) as a way to evaluate machine intelligence. He opened with the question 'Can machines think?', judged it too vague to answer directly, and replaced it with a practical one: could a machine convincingly imitate a human in conversation?

The Dartmouth Conference

In the summer of 1956, a workshop of roughly two months at Dartmouth College gave the field its name. The term 'Artificial Intelligence' had been introduced in the 1955 proposal for the workshop, which stated: 'Every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.' The gathering brought together many of the founders of the field.

Backpropagation Rediscovered

In 1986, Rumelhart, Hinton, and Williams published 'Learning Representations by Back-propagating Errors' in Nature, demonstrating that backpropagation could train multi-layer neural networks effectively. The same year, the PDP (Parallel Distributed Processing) group published its influential two-volume work on connectionism.

AlexNet: The ImageNet Moment

In 2012, AlexNet, a deep convolutional neural network, won the ImageNet competition by a wide margin: its top-5 error rate was roughly 16%, against about 26% for the runner-up. Trained on two NVIDIA GTX 580 GPUs, it was deeper and far larger than previous entries. The AI community was stunned.

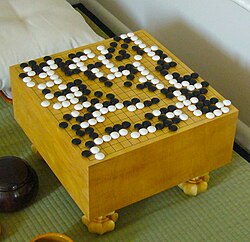

AlphaGo Defeats Lee Sedol

In March 2016, DeepMind's AlphaGo defeated Lee Sedol, one of the greatest Go players ever, 4-1 in a five-game match in Seoul. Go has more legal board positions than there are atoms in the observable universe, so exhaustive search was impossible. AlphaGo instead combined deep neural networks, trained with supervised and reinforcement learning, with Monte Carlo tree search. In Game 2, it played Move 37, a move so unexpected that experts called it 'beautiful' and 'not a human move.'

Attention Is All You Need: The Transformer

In 2017, eight researchers at Google published 'Attention Is All You Need,' introducing the Transformer architecture. It replaced recurrence with self-attention, in which every position in a sequence attends directly to every other position, so an entire sequence can be processed in parallel rather than token by token. The paper's title was deliberately bold, and it proved prescient.
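
For readers who want to see the core idea concretely, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is an illustration under simplifying assumptions, not the paper's full layer: queries, keys, and values are all set equal to the input vectors (a real Transformer learns separate projection matrices for each), there is a single head, and the names `softmax`, `self_attention`, and `tokens` are chosen here for the example.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x):
    """Scaled dot-product self-attention with identity projections.

    x is a list of token vectors (lists of floats). Assumption for brevity:
    Q = K = V = x, where a real layer would apply learned weight matrices.
    """
    d = len(x[0])
    scale = math.sqrt(d)
    outputs = []
    # Each output row depends only on x, not on other output rows, which is
    # why the rows can be computed in parallel (in practice, as one matmul).
    for q in x:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in x]
        weights = softmax(scores)
        out = [sum(w * v[j] for w, v in zip(weights, x)) for j in range(d)]
        outputs.append(out)
    return outputs

tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = self_attention(tokens)
```

Each row of `attended` is a weighted average of all input vectors, with weights set by how strongly that token's query matches every key. A recurrent network, by contrast, has to step through the sequence one position at a time.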

ChatGPT: AI Goes Mainstream

In November 2022, OpenAI released ChatGPT, a conversational AI based on GPT-3.5 fine-tuned with RLHF (Reinforcement Learning from Human Feedback). It reached 1 million users in 5 days and an estimated 100 million in 2 months, making it the fastest-growing consumer application on record at the time. People used it to write emails, debug code, brainstorm ideas, and handle countless other tasks.

GPT-4: Multimodal Intelligence

In March 2023, OpenAI released GPT-4, a multimodal model that could accept both text and images as input. OpenAI reported that it scored around the 90th percentile on a simulated bar exam and 1410 on the SAT, and it showed markedly more nuanced reasoning than its predecessor. It was a large step up from GPT-3.5 in accuracy, safety, and capability.

The Rise of AI Agents

By 2025, frontier models were being wrapped in systems that could browse the web, call tools, edit files, execute code, manage state, and carry multi-step tasks forward with limited supervision. Claude Code, OpenAI's Operator, Google's Project Mariner, OpenClaw, and a wave of agent frameworks turned 'AI agent' from a research label into a practical product category.
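
The pattern these products share can be sketched as a small loop: choose an action, call a tool, record the result, repeat. The example below is a toy illustration, not any vendor's implementation. `run_agent`, `scripted_policy`, and the two arithmetic tools are hypothetical names invented for this sketch, and the policy is a hard-coded stand-in for what would normally be a model call.

```python
def run_agent(goal, policy, tools, max_steps=10):
    """Run a plan-act-observe loop until the policy signals completion."""
    history = []  # state carried across steps: (tool, argument, observation)
    for _ in range(max_steps):
        action = policy(goal, history)
        if action["tool"] == "finish":
            return action["arg"], history
        observation = tools[action["tool"]](action["arg"])
        history.append((action["tool"], action["arg"], observation))
    return None, history  # step budget exhausted without finishing

# Hypothetical tools; a real system would wrap a browser, shell, or file API.
tools = {
    "add": lambda pair: pair[0] + pair[1],
    "double": lambda n: n * 2,
}

def scripted_policy(goal, history):
    # Stand-in for a model call: chooses the next action from the step count
    # and feeds the previous observation forward as the next argument.
    if len(history) == 0:
        return {"tool": "add", "arg": (2, 3)}
    if len(history) == 1:
        return {"tool": "double", "arg": history[-1][2]}
    return {"tool": "finish", "arg": history[-1][2]}

result, trace = run_agent("compute (2 + 3) * 2", scripted_policy, tools)
```

The `max_steps` budget reflects the "limited supervision" part of the description: the loop runs on its own, but only within a bound set from outside.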